Why search relevance matters

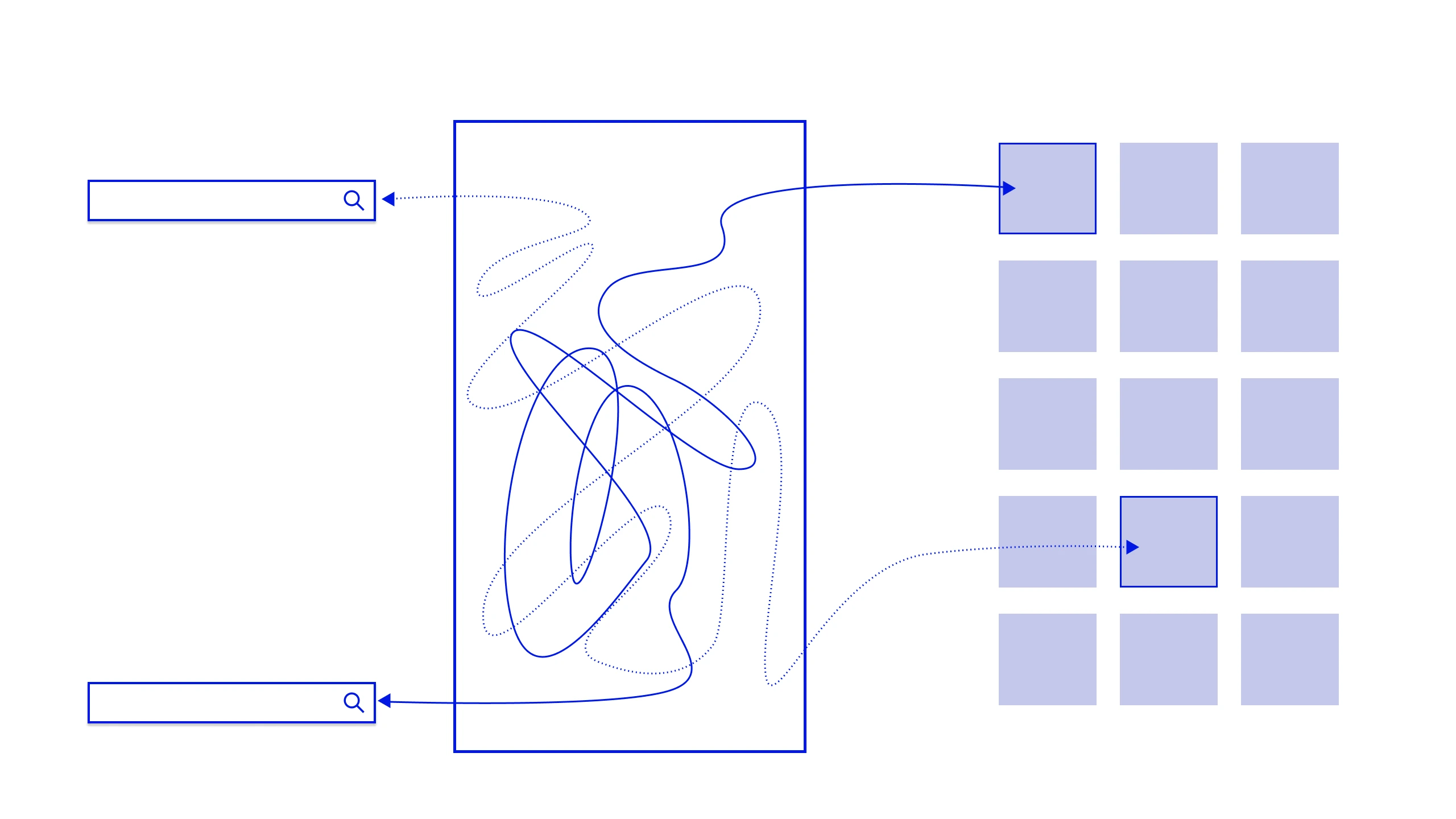

Machine learning models powering search engines and recommendation systems depend heavily on well-labeled, high-quality training data. However, algorithms alone can't fully capture human intent. What seems "relevant" to a user is influenced by language nuances, cultural context, and individual preferences.

Our process-driven services bridge this gap by:

Training ML models with quality data: Ensuring that query-result pairs reflect real-world user expectations.

Human-centered judgments: Using domain-trained annotators to evaluate whether results are truly relevant.

Continuous feedback loops: Feeding human insights back into your ML systems to improve performance over time.

What we deliver

Every service is built around one idea: human judgment, structured and scaled into training data your models can actually learn from.

Search query intent labeling

We classify queries by intent type — navigational, informational, transactional, comparative — and annotate for modifiers like urgency, specificity, and ambiguity. Labeled intent data is the foundation of query understanding models and query rewriting systems.

Product & content catalogue annotation

Attributes, categories, synonyms, facets, and relevance tags applied to your catalogue by trained specialists. Clean, consistent catalogue annotation is what separates a search engine that surfaces the right SKU from one that serves the best-selling one.

Personalization signal labeling

We structure and label behavioural signals — clicks, dwells, skips, purchases, returns — to train collaborative filtering and session-based recommendation models. Human reviewers audit edge cases and cold-start items where signal data alone is insufficient.

Ranking model evaluation (human-in-the-loop)

Before you ship a new ranking model, our evaluators run structured blind comparisons — SERP A vs SERP B — across statistically representative query sets. You get human preference data and inter-rater reliability scores to validate ranking improvements before they reach production.
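To make the blind comparison concrete, here is a minimal sketch of how side-by-side preferences might be summarized. It assumes each judgment is recorded as "A", "B", or "tie", drops ties, and applies an exact two-sided sign test. The function name, the label values, and the sample counts are illustrative assumptions, not a description of any specific evaluation pipeline.

```python
from math import comb


def summarize_side_by_side(preferences):
    """Summarize blind SERP A vs SERP B judgments.

    preferences: iterable of "A", "B", or "tie" (hypothetical label values).
    Returns (win rate of B among non-tied judgments, two-sided sign-test p-value).
    """
    wins_a = sum(1 for p in preferences if p == "A")
    wins_b = sum(1 for p in preferences if p == "B")
    n = wins_a + wins_b  # ties carry no preference, so they are excluded
    if n == 0:
        raise ValueError("need at least one non-tied judgment")

    # Exact binomial tail under the null hypothesis that A and B are
    # equally likely to be preferred (p = 0.5).
    k = max(wins_a, wins_b)
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    p_value = min(1.0, 2 * tail)
    return wins_b / n, p_value


# Illustrative data only: 14 preferences for B, 4 for A, 2 ties.
sample = ["B"] * 14 + ["A"] * 4 + ["tie"] * 2
win_rate_b, p_value = summarize_side_by_side(sample)
print(f"B preferred in {win_rate_b:.1%} of decided comparisons, p = {p_value:.4f}")
```

A small p-value suggests the preference for one ranking is unlikely to be chance. In practice the query set would be far larger, and each query would usually be judged by more than one evaluator.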

Ongoing relevance monitoring

Relevance isn't a one-time project. As your catalogue changes and user behaviour evolves, we run regular rejudging cycles, surface degradation signals, and supply fresh training data on a cadence that matches your retraining schedule.

Why teams choose DATACLAP for search & personalization data

The difference between a good search model and a great one is the quality and specificity of its training signal. That's what we provide.

Domain-trained annotators

Relevance is context-dependent. A query like "python" means something different on a developer tools platform than on a pet supplies site. We train annotators specifically on your domain, catalogue, and user intent patterns before a single judgment is made.

Rubric design included

Most teams don't have a relevance grading rubric — or have one that's too vague to produce consistent labels. We work with you to define a rubric that maps to your business goals, then train annotators to apply it consistently across locales and query types.

Inter-annotator agreement tracking

Label consistency is measured on every batch. We track inter-annotator agreement (Cohen's Kappa or Krippendorff's Alpha) and flag annotator drift early — so your training data stays reliable as projects scale.
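For readers who want to see what an agreement score looks like, below is a minimal sketch of Cohen's Kappa for two annotators labeling the same items. The label values and sample data are illustrative assumptions. Krippendorff's Alpha, which handles more than two annotators and missing labels, is not shown here.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's Kappa for two annotators over the same ordered list of items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item set")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Illustrative judgments only.
annotator_1 = ["relevant", "relevant", "irrelevant", "partial", "relevant", "irrelevant"]
annotator_2 = ["relevant", "partial", "irrelevant", "partial", "relevant", "relevant"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.3f}")
```

A Kappa of 1 means perfect agreement and 0 means agreement no better than chance. Tracking the score per batch is one way to spot drift, since a falling value signals that annotators are diverging in how they apply the rubric.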

Multi-language & multi-locale support

Search relevance varies by market. We provide native language annotators for your key locales — not machine-translated instructions applied to bilingual generalists — so relevance judgments reflect how real users in that market actually search.

Seamless retraining integration

Labeled data is delivered in the format your pipeline expects — CSV, JSON, JSONL, or direct API — on a schedule that aligns with your retraining cadence. Fresh human signal, ready to ingest.
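As an illustration of the JSONL option, the sketch below writes and reads relevance judgments with one JSON object per line. The field names (`query`, `doc_id`, `grade`, `locale`, `annotator_id`) and the grade scale are hypothetical. The actual schema would be agreed per project.

```python
import json

# Hypothetical judgment records; field names and grade scale are assumptions.
judgments = [
    {"query": "python", "doc_id": "sku-1042", "grade": 0, "locale": "en-GB", "annotator_id": "a17"},
    {"query": "python", "doc_id": "doc-2210", "grade": 3, "locale": "en-GB", "annotator_id": "a17"},
    {"query": "chaussures de course", "doc_id": "sku-0087", "grade": 2, "locale": "fr-FR", "annotator_id": "b04"},
]


def to_jsonl(records):
    """Serialize records as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records) + "\n"


def from_jsonl(text):
    """Parse JSONL text back into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in text.split("\n") if line.strip()]


payload = to_jsonl(judgments)
restored = from_jsonl(payload)
print(f"{len(restored)} judgments round-tripped")
```

Because each line is an independent record, a retraining job can stream the file and append new batches without re-parsing earlier ones.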

GDPR-compliant, ISO 27001-ready

Search logs and user behaviour data are sensitive. All annotation workflows run under strict access controls, encrypted transfer, and data handling agreements that meet your compliance requirements.

Industries we serve

Search and personalization look different in every industry, and so does the training data that powers them.

Better training data. Smarter models. More relevant results.

Tell us your query volumes, locales, and retraining cadence — we'll scope a human judgment program around them.